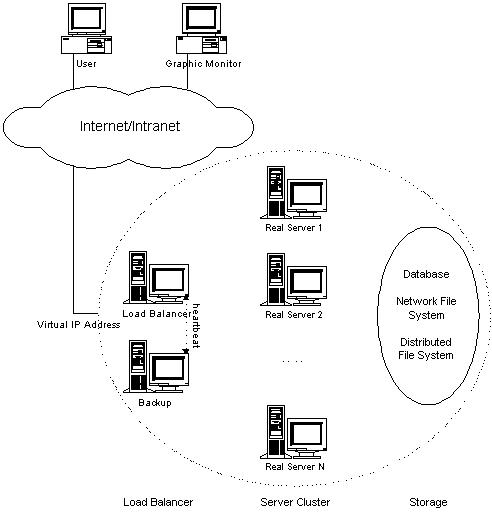

Linux Virtual Server (LVS) is an advanced load balancing solution for Linux systems. It is an open source project started by Wensong Zhang in May 1998. The mission of the project is to build a high-performance and highly available server for Linux using clustering technology, which provides good scalability, reliability and serviceability.

The main work of the LVS project is now the development of advanced IP load balancing software (IPVS), application-level load balancing software (KTCPVS), and cluster management components.

In computing, load balancing is a technique used to spread workload among many processes, computers, networks, disks or other resources, so that no single resource is overloaded.

Examples of network load balancing:

Layer-2 Load Balancing

Layer-2 load balancing, also known as link aggregation, port aggregation, EtherChannel, or Gigabit EtherChannel port bundling, bonds two or more links into a single, higher-bandwidth logical link. Aggregated links also provide redundancy and fault tolerance if each of the aggregated links follows a different physical path. Link aggregation may be used to improve access to public networks by aggregating modem links or digital lines. It may also be used in the enterprise network to build multigigabit backbone links between Gigabit Ethernet switches. See also NIC teaming and the Link Aggregation Control Protocol (LACP).

The Linux kernel has the Linux bonding driver, which can aggregate multiple links for higher throughput or fault tolerance.
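As a rough illustration of the bonding driver, here is a minimal sketch that assumes the iproute2 `ip` tool is installed. The names eth0, eth1 and bond0 are placeholders, and the 802.3ad (LACP) mode is only one possible choice that also requires matching configuration on the switch. The script only prints the commands unless you run it as root with DRY set to an empty value.

```shell
#!/bin/sh
# Sketch: bond two NICs into one logical link with iproute2.
# eth0, eth1 and bond0 are placeholder names, adjust them to your system.
# DRY defaults to "echo", so the commands are only printed.
# Run with DRY= (empty) as root to really apply them.
DRY=${DRY-echo}

make_bond() {
    bond=$1; shift
    # 802.3ad is LACP; the switch ports must be configured for it too.
    $DRY ip link add "$bond" type bond mode 802.3ad
    for nic in "$@"; do
        # A link has to be down before it can be attached to the bond.
        $DRY ip link set "$nic" down
        $DRY ip link set "$nic" master "$bond"
    done
    $DRY ip link set "$bond" up
}

make_bond bond0 eth0 eth1
```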

Layer-4 Load Balancing

Layer-4 load balancing distributes requests to the servers at the transport layer, for protocols such as TCP, UDP and SCTP. The load balancer distributes network connections from clients, who know a single IP address for a service, to a set of servers that actually perform the work. Since in a connection-oriented transport the connection must be established between client and server before the request content is sent, the load balancer usually selects a server without looking at the content of the request.

IPVS is an implementation of layer-4 load balancing for the Linux kernel, and has been ported to FreeBSD recently.
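To make the "select a server without looking at the content" idea concrete, here is a toy round-robin picker. It is not how IPVS is implemented (IPVS does this inside the kernel, with several schedulers), and the server addresses and the `pick_server` helper are made up for the example.

```shell
#!/bin/sh
# Toy round-robin picker: each new connection gets the next server in the
# list, regardless of what the request will contain.
SERVERS="10.0.0.11 10.0.0.12 10.0.0.13"   # hypothetical realserver addresses

# pick_server N -> prints the server for the N-th connection (0-based)
pick_server() {
    n=$1
    set -- $SERVERS           # positional parameters now hold the servers
    shift $(( n % $# ))       # skip (N mod number-of-servers) entries
    echo "$1"
}

for c in 0 1 2 3; do
    echo "connection $c -> $(pick_server "$c")"
done
```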

Layer-7 Load Balancing

Layer-7 load balancing, also known as application-level load balancing, parses requests at the application layer and distributes them to servers based on the type of content requested, so that it can meet quality-of-service requirements for different types of content and improve overall cluster performance. The overhead of parsing requests at the application layer is high, so its scalability is limited compared to layer-4 load balancing.

KTCPVS is an implementation of layer-7 load balancing for the Linux kernel. With the appropriate modules, the Apache, Lighttpd and nginx web servers can also provide layer-7 load balancing as a reverse proxy.

IPVS in practice

IPVS (IP Virtual Server) implements transport-layer load balancing inside the Linux kernel, so-called Layer-4 switching. IPVS running on a host acts as a load balancer in front of a cluster of real servers: it directs requests for TCP/UDP-based services to the real servers and makes their services appear as a single virtual service on a single IP address.

IPVS is included in all recent Linux kernels, so nothing has to be done on the kernel side. To install the user-space component, use your package manager, for example on Ubuntu:

    aptitude install ipvsadm
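If you want to check that the kernel side is really there, a small test like the following can help. It relies on /proc/net/ip_vs, which appears once the ip_vs module is loaded or built in. The `ipvs_status` helper and its optional path argument are only for this sketch.

```shell
#!/bin/sh
# Sketch: report whether IPVS support is active on this host.
# /proc/net/ip_vs exists once the ip_vs module is loaded (or built in).
# The optional argument overrides the path and is only there for testing.
ipvs_status() {
    if [ -e "${1:-/proc/net/ip_vs}" ]; then
        echo "ipvs: available"
    else
        echo "ipvs: not loaded (try: modprobe ip_vs)"
    fi
}

ipvs_status
```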

The ipvsadm package has no graphical interface and follows the Unix principle of doing one thing and doing it well: its only goal is to provide a tool to manage a layer-4 load balancer.

Methods of balancing used by LVS

With ipvsadm you can select the method used to forward packets from the load balancer to the real servers. The main methods are:

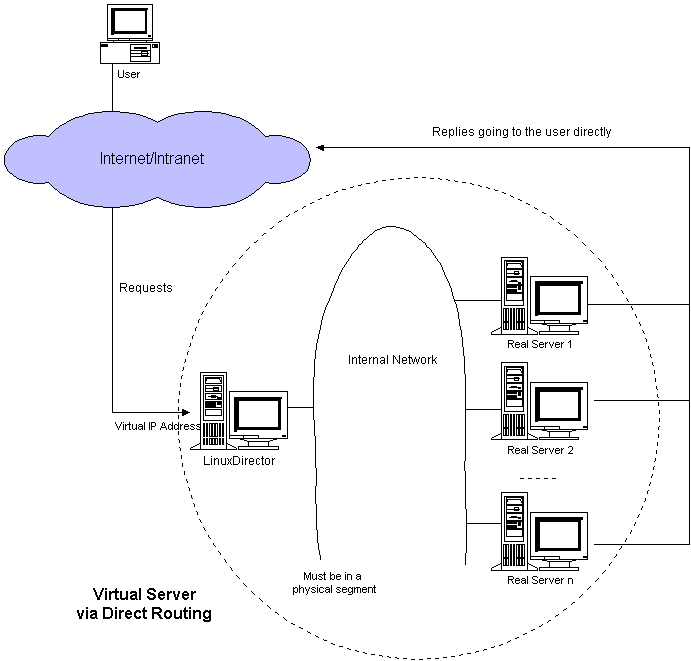

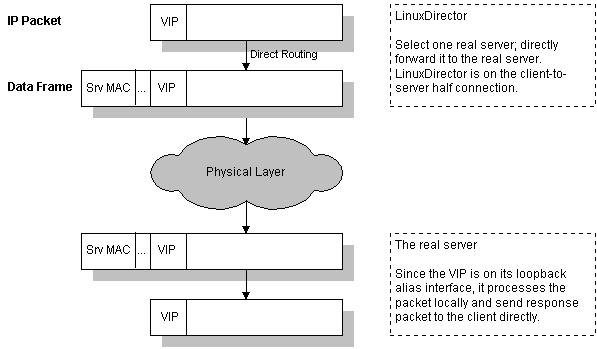

- LVS-DR (direct routing) where the destination MAC address on the packet is changed and the packet is forwarded to the realserver

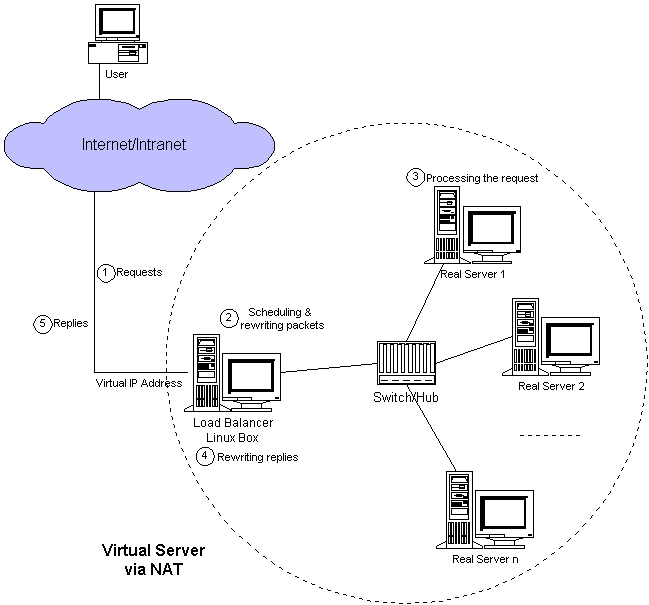

- LVS-NAT based on network address translation (NAT)

- LVS-Tun (tunneling) where the packet is IPIP encapsulated and forwarded to the realserver.
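In ipvsadm each of these methods corresponds to a flag given when a realserver is added: `-g` (gatewaying, i.e. direct routing), `-m` (masquerading, i.e. NAT) and `-i` (ipip, i.e. tunneling). The helper below is just a memory aid; the function name `forward_flag` is invented for this sketch.

```shell
#!/bin/sh
# Map a forwarding method name to the matching ipvsadm realserver flag.
forward_flag() {
    case $1 in
        dr|DR)   echo "-g" ;;   # --gatewaying: direct routing (the default)
        nat|NAT) echo "-m" ;;   # --masquerading: NAT
        tun|TUN) echo "-i" ;;   # --ipip: IP-in-IP tunneling
        *)       echo "unknown method: $1" >&2; return 1 ;;
    esac
}

for m in dr nat tun; do
    echo "$m uses $(forward_flag "$m")"
done
```

For example, `/sbin/ipvsadm -a -t $VIP:http -r $REAL1 $(forward_flag nat) -w 1` would add a realserver in LVS-NAT mode.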

LVS Cluster components and definitions

Client: a machine (any OS) with an HTTP client (any browser is OK).

Director: This is the machine that redistributes the load among the “real-servers”. The director is basically a router, with routing tables set up for the LVS function. These tables allow the director to forward packets to realservers for services that are being LVS’ed.

Real-servers: These are the machines with the running service.

VIP: The director presents a single IP address, the Virtual IP (VIP), to clients. When a client connects to the VIP, the director chooses one realserver and forwards the client’s packets to it for the duration of that connection.

LVS-DR

LVS-DR is based on IBM’s NetDispatcher. The NetDispatcher sits in front of a set of webservers, which appear as one webserver to the clients. The NetDispatcher served HTTP for the Atlanta and the Sydney Olympic games and for the chess match between Kasparov and Deep Blue.

When the packet CIP->VIP (CIP being the client’s IP address) arrives at the director, it is put into the OUTPUT chain as a layer-2 packet whose destination is the MAC address of the chosen realserver. This sidesteps the routing problem of a packet with dest = VIP when the VIP is local to the director. When the packet arrives at the realserver, the realserver finds it addressed to an IP that is local to it (the VIP) and accepts it. The reply then goes from the realserver straight to the client, without passing back through the director.

So on the director you configure the VIP on a “real” network card, and on each realserver you configure the VIP on the loopback interface.
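The VIP placement just described could look roughly like this. The address 192.168.1.100 and the interface eth0 are example values. The two ARP sysctls are not mentioned above: they are the usual way to keep realservers from answering ARP requests for the VIP, so that only the director does, and you should treat them as an assumption to verify on your own network. As written, the script only prints the commands.

```shell
#!/bin/sh
# Sketch of the VIP placement for LVS-DR (example address and interface).
# DRY defaults to "echo": commands are printed, not executed.
# Run with DRY= (empty) as root to apply them.
DRY=${DRY-echo}
VIP=${VIP:-192.168.1.100}

# On the director: the VIP lives on a real interface that answers ARP.
director_vip() {
    $DRY ip addr add "$VIP/32" dev "${1:-eth0}"
}

# On each realserver: the VIP lives on loopback, and ARP replies and
# announcements for it are suppressed (assumed setup, see the text above).
realserver_vip() {
    $DRY sysctl -w net.ipv4.conf.all.arp_ignore=1
    $DRY sysctl -w net.ipv4.conf.all.arp_announce=2
    $DRY ip addr add "$VIP/32" dev lo
}

echo "# director:";   director_vip eth0
echo "# realserver:"; realserver_vip
```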

LVS-NAT

LVS-NAT is based on Cisco’s LocalDirector.

This method was used for the first LVS. If you want to set up a test LVS, this requires no modification of the realservers and is still probably the simplest setup.

With LVS-NAT, the director rewrites incoming packets, changing the dst_addr from the VIP to the address of one of the realservers (its RIP), and then forwards them to that realserver. The replies from the realserver are sent to the director, where they are rewritten and returned to the client with the source address changed from the RIP back to the VIP.
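A director set up for LVS-NAT might look like the sketch below. The addresses are example values and `nat_director` is just a name chosen here. It also assumes that the realservers use the director as their default gateway, so that replies really come back through it. By default the script only prints the commands.

```shell
#!/bin/sh
# Sketch: LVS-NAT on the director (example addresses).
# DRY defaults to "echo": commands are printed, not executed.
# Run with DRY= (empty) as root to apply them.
DRY=${DRY-echo}
VIP=${VIP:-192.168.1.100}
REALS=${REALS:-"10.0.0.11 10.0.0.12"}

nat_director() {
    # The director routes packets between clients and realservers.
    $DRY sysctl -w net.ipv4.ip_forward=1
    # Virtual HTTP service on the VIP, weighted round-robin scheduling.
    $DRY /sbin/ipvsadm -A -t "$VIP:http" -s wrr
    for rip in $REALS; do
        # -m selects masquerading (NAT) as the forwarding method.
        $DRY /sbin/ipvsadm -a -t "$VIP:http" -r "$rip" -m -w 1
    done
}

nat_director
```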

LVS-Tun

LVS-Tun is an LVS original. It is based on LVS-DR. The LVS code encapsulates the original packet (CIP->VIP) inside an IPIP packet of DIP->RIP (director IP to realserver IP), which is then put into the OUTPUT chain, where it is routed to the realserver. The realserver receives the packet on a tunl0 device and decapsulates the IPIP packet, revealing the original CIP->VIP packet.

This is the least used of the three methods in production environments.
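For LVS-Tun the two sides could be prepared more or less as follows. The address is an example, the function names are invented for this sketch, and the rp_filter line is an assumption: reverse-path filtering typically has to be relaxed for the decapsulated packets, and net.ipv4.conf.all.rp_filter may need checking as well. The script only prints the commands by default.

```shell
#!/bin/sh
# Sketch: LVS-Tun on director and realserver (example address).
# DRY defaults to "echo": commands are printed, not executed.
# Run with DRY= (empty) as root to apply them.
DRY=${DRY-echo}
VIP=${VIP:-192.168.1.100}

# Director: add realserver $1 with IPIP forwarding (-i).
tun_director_add() {
    $DRY /sbin/ipvsadm -a -t "$VIP:http" -r "$1" -i -w 1
}

# Realserver: receive the tunneled packets on tunl0.
tun_realserver() {
    $DRY modprobe ipip                      # provides the tunl0 device
    $DRY ip addr add "$VIP/32" dev tunl0    # the VIP must be local here too
    $DRY ip link set tunl0 up
    # Assumed tweak: relax reverse-path filtering on the tunnel device.
    $DRY sysctl -w net.ipv4.conf.tunl0.rp_filter=0
}

echo "# director:";   tun_director_add 10.0.0.11
echo "# realserver:"; tun_realserver
```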

Basic Setup with ipvsadm

This is an example of the ipvsadm commands you can use on one director that load balances one VIP address ($VIP) across three realservers ($REAL1, $REAL2, $REAL3).

First, we clear the table and add the virtual service with these commands:

    # clear the ipvsadm table
    /sbin/ipvsadm -C

    # create the virtual server: HTTP on the VIP, weighted round-robin scheduling
    /sbin/ipvsadm -A -t $VIP:http -s wrr

Now we add the 3 realservers in LVS-DR mode:

    # forward http to each realserver using direct routing (-g) with weight 1
    # add as many realservers as you want
    /sbin/ipvsadm -a -t $VIP:http -r $REAL1 -g -w 1
    /sbin/ipvsadm -a -t $VIP:http -r $REAL2 -g -w 1
    /sbin/ipvsadm -a -t $VIP:http -r $REAL3 -g -w 1

For this configuration to work you still need to configure the $VIP on the three realservers. Other than that you are done: you have a working load balancer.
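To see whether the director is doing what you expect, ipvsadm can list its table. The `show_lvs` wrapper below is only a convenience for this sketch. It prints the commands by default, and with DRY set to an empty value (as root) it runs them.

```shell
#!/bin/sh
# Sketch: inspect the LVS table on the director.
# DRY defaults to "echo": commands are printed, not executed.
DRY=${DRY-echo}

show_lvs() {
    $DRY /sbin/ipvsadm -L -n           # virtual services and their realservers
    $DRY /sbin/ipvsadm -L -n --stats   # packet and byte counters
    $DRY /sbin/ipvsadm -L -n -c        # current connection entries
}

show_lvs
```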
