Or: zip vs gzip vs bzip2 vs xz

In a previous article about the tar program I mentioned bzip2 and gzip as options for compressing a tar archive (and I forgot xz).

To make amends, today I will present the main ways to compress files and run a few tests to see how they behave.

I will consider zip, gzip, bzip2 and xz. I will not test compress, another compression program found on Linux systems, because it is dated and the other programs outperform it.

But first, an overview of these four compression methods and programs.

Zip

ZIP is a data compression format that is very widespread on IBM PC compatibles running Microsoft operating systems, and it is supported by default on Apple computers running Mac OS X. It supports several compression algorithms: the most widely used is DEFLATE, while one of the early methods (Shrink) is based on a variant of the LZW algorithm. Each file is compressed separately, which makes it possible to extract individual files quickly (sometimes even from partially damaged archives) at the expense of the overall compression ratio. A ZIP file can be recognized by its "PK" header (ASCII encoded).
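The "PK" signature can be checked directly from the shell. What follows is a minimal sketch, not something used in the tests in this article: the function name `detect_format` is made up for illustration, and the gzip, bzip2 and xz signatures are the usual magic numbers of those formats, added here only for comparison.

```shell
# Hypothetical helper: guess the compression format of a file from its first bytes.
detect_format() {
    # Dump the first 6 bytes as lowercase hex and strip separators, e.g. "504b03041400".
    magic=$(od -An -tx1 -N6 "$1" | tr -d ' \n')
    case "$magic" in
        504b*)         echo zip ;;     # "PK" in ASCII
        1f8b*)         echo gzip ;;
        425a68*)       echo bzip2 ;;   # "BZh" in ASCII
        fd377a585a00*) echo xz ;;
        *)             echo unknown ;;
    esac
}
```

Calling `detect_format archive.zip` would print `zip` for a regular ZIP archive. The check looks only at the leading bytes, so it says nothing about whether the rest of the file is intact.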

The format was created in 1989 by Phil Katz for PKZIP, as an alternative to Thom Henderson's earlier ARC compression format.

The original format supported a weak password protection scheme (symmetric encryption) that is now considered inadequate. More recent versions of the programs that handle this format often support newer and stronger algorithms (for example AES).

Gzip

gzip is a free program for data compression. Its name is a contraction of GNU zip. It was originally created by Jean-Loup Gailly and Mark Adler. Version 0.1 was publicly released on 31 October 1992, and version 1.0 followed in February 1993.

Normally each file is replaced by one with the .gz extension, keeping the same ownership, access time and modification time (the default extension is gz on Linux or OpenVMS, z on MS-DOS, OS/2 FAT, Windows NT FAT and Atari).
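To make the replacement behaviour concrete, here is a small sketch with a throwaway file (the directory and file names are made up for the example). It relies only on `gzip`, `gunzip` and the `-c` option, which writes the compressed data to standard output and leaves the input file untouched.

```shell
workdir=$(mktemp -d)
printf 'hello hello hello\n' > "$workdir/note.txt"

gzip "$workdir/note.txt"            # note.txt is replaced by note.txt.gz
after_gzip=$(ls "$workdir")

gunzip "$workdir/note.txt.gz"       # note.txt.gz is replaced by note.txt again
after_gunzip=$(ls "$workdir")

# With -c the original stays in place and the compressed copy goes where we redirect it.
gzip -c "$workdir/note.txt" > "$workdir/copy.gz"

echo "after gzip: $after_gzip"
echo "after gunzip: $after_gunzip"
```

bzip2 and xz follow the same convention, which is why the plain commands in the benchmark section leave only the compressed file behind.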

gzip uses the Lempel-Ziv algorithm (LZ77) also used in zip and PKZIP. The amount of compression obtained depends on the size of the input and on the distribution of common substrings. Typically, text such as source code or English is reduced by 60-70%. Compression is generally much better than that achieved by LZW (as used in compress), Huffman coding (as used in pack), or adaptive Huffman coding (compact).

bzip2

bzip2 is a patent-free, open source data compression algorithm. At the time of writing, the latest version is 1.0.6, released on 20 September 2010.

Developed by Julian Seward, it was first publicly released in July 1996 (version 0.15). Its popularity grew quickly because its compression was high and stable; version 1.0 was released in 2000.

In most cases bzip2 produces noticeably smaller compressed files than gzip or ZIP, but it pays for this in performance, being somewhat slower.

Nevertheless, as Moore's law steadily makes machine time cheaper and less important, high-compression methods such as bzip2 have become more popular. Indeed, according to its author, bzip2 comes within ten to fifteen percent of the best compression algorithms currently known.

XZ Utils

XZ Utils is free, general-purpose data compression software with a high compression ratio. XZ Utils were written for POSIX-like systems, but they also work on some not-so-POSIX systems. XZ Utils is the successor to LZMA Utils.

The core of the XZ Utils compression code is based on the LZMA SDK, but it has been modified quite a lot to suit XZ Utils. The primary compression algorithm is currently LZMA2, which is used inside the .xz format. With typical files, XZ Utils produces output about 30% smaller than gzip and about 15% smaller than bzip2.

XZ Utils consists of several components:

* liblzma is a compression library with an API similar to that of zlib.

* xz is a command-line tool with syntax similar to that of gzip.

* xzdec is a decompression-only tool, smaller than the full-featured xz tool.

* A set of shell scripts (xzgrep, xzdiff, etc.) has been adapted from gzip to make it easier to view, grep and compare compressed files.

* Emulation of the LZMA Utils command-line tools eases the transition from LZMA Utils to XZ Utils.

Benchmark

I always used the basic commands without adding any options, so given nomefile as the file to test, I ran:

zip nomefile.zip nomefile

gzip nomefile

bzip2 nomefile

xz nomefile
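A hedged sketch of how such a comparison could be scripted; this is not the procedure actually used for the numbers in this article. The function names `bytes` and `compare_sizes` are made up, tools that are not installed are skipped, and `-c` is used so that the original file is kept instead of being replaced. zip is left out because it takes the archive name before the input file.

```shell
# Hypothetical helper: size of a file in bytes, without padding.
bytes() {
    wc -c < "$1" | tr -d ' '
}

# Hypothetical helper: compress one file with each available tool
# and print "<bytes> <name>" for the original and for every result.
compare_sizes() {
    file=$1
    echo "$(bytes "$file") $file"
    for pair in gzip:gz bzip2:bz2 xz:xz; do
        tool=${pair%%:*}    # part before the colon: the command name
        ext=${pair##*:}     # part after the colon: the file extension
        # Skip tools that are not installed on this system.
        command -v "$tool" >/dev/null 2>&1 || continue
        "$tool" -c "$file" > "$file.$ext"
        echo "$(bytes "$file.$ext") $file.$ext"
    done
}
```

Running `compare_sizes nomefile` prints one line per file, in the same "size name" layout used for the results in this section.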

Text

Tests done with the text file of the Bible in Basic English (bbe); the uncompressed file is 4467663 bytes (a little over 4 MB).

These are the results:

4467663 bbe

1282842 bbe.zip

1282708 bbe.gz

938552 bbe.xz

879807 bbe.bz2
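To compare these byte counts more easily, the space saved can be expressed as (1 - compressed / original) × 100. A small sketch using awk (the function name `saved_percent` is made up for illustration):

```shell
# Hypothetical helper: percentage of space saved,
# given the original and the compressed size in bytes.
saved_percent() {
    LC_ALL=C awk -v orig="$1" -v comp="$2" \
        'BEGIN { printf "%.1f\n", (1 - comp / orig) * 100 }'
}

saved_percent 4467663 1282708   # bbe.gz  -> 71.3
saved_percent 4467663 879807    # bbe.bz2 -> 80.3
```

On this file gzip saves a little more than the 60-70% quoted earlier as typical for text, and bzip2 saves roughly nine percentage points more than gzip.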

With a somewhat bigger text file (41 MB) the results are:

41M big2.txt

15M big2.txt.zip

15M big2.txt.gz

11M big2.txt.bz2

3.6M big2.txt.xz

A further test with the default options and a 64 MB file:

64M 65427K big3.txt

26M 25763K big3.txt.zip

26M 25763K big3.txt.gz

24M 23816K big3.txt.bz2

2.2M 2237K big3.txt.xz

As a further test, following a suggestion in the comments, I used the -9 flag (maximum compression) with these commands:

zip -9 big3.txt.zip big3.txt

gzip -9 -c big3.txt >> big3.txt.gz

bzip2 -9 -c big3.txt >> big3.txt.bz2

xz -e -9 -c big3.txt >> big3.txt.xz

And these are the results, with size and file name:

64M 65427K big3.txt

25M 25590K big3.txt.zip

25M 25590K big3.txt.gz

24M 23816K big3.txt.bz2

2.2M 2218K big3.txt.xz

Avi

I used a 700 MB file encoded with XviD; as expected, the compression results were rather poor:

700 MB file.avi

694 MB file.avi.zip

694 MB file.avi.gz

694 MB file.avi.xz

693 MB file.avi.bz2

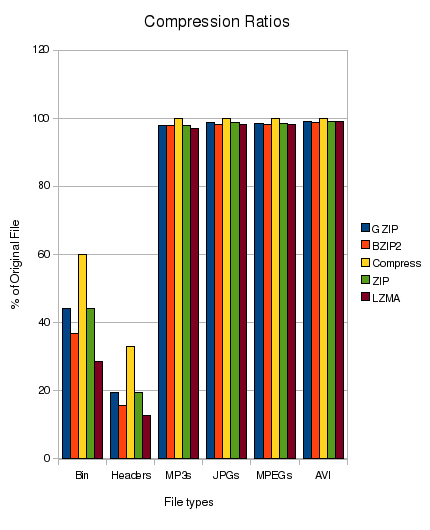

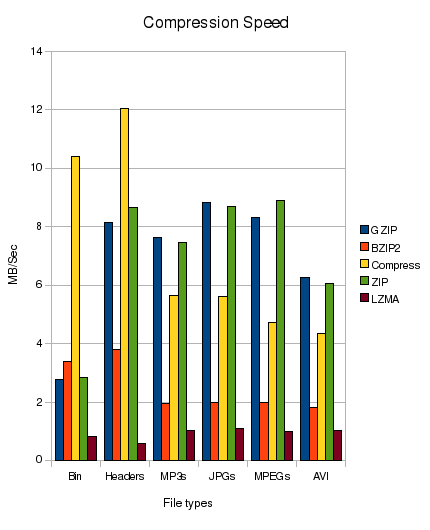

On another site I found very similar tests, which I include for completeness. They were run on:

- Binaries 6,092,800 Bytes taken from my /usr/bin directory

- Text Files 43,100,160 Bytes taken from kernel source at /usr/src/linux-headers-2.6.28-15

- MP3s 191,283,200 Bytes, a random selection of MP3s

- JPEGs 266803200 Bytes, a random selection of JPEG photos

- MPEG 432,240,640 Bytes, a random MPEG encoded video

- AVI 734,627,840 Bytes, a random AVI encoded video

The author of those tests created a tar file for each category, so that each test runs on a single file (as far as that author knows, tarring the files does not affect the compression test). Each test was run 10 times by a script and the average was taken, to make the results as fair as possible.

Conclusions

Based on my own empirical tests and on those I have seen on other sites, I would recommend LZMA (aka XZ). It is somewhat slower and uses more CPU cycles, but it achieved by far the best compression ratio on the two larger text files (bzip2 was slightly ahead only on the small bbe file), and text files are typically the ones you care about compressing.

In addition, it is fully integrated with tar (option J) and is easy to integrate with logrotate.
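As a sketch of that tar integration: the function name `make_tarball` and the directory names are made up for the example. The flags themselves are the usual tar ones, `-z` for gzip, `-j` for bzip2 and `-J` for xz, and the matching compressor has to be installed.

```shell
# Hypothetical helper: archive a directory with the chosen compressor.
# Usage: make_tarball <directory> <gz|bz2|xz>   (directory without a trailing slash)
make_tarball() {
    dir=$1
    case "$2" in
        gz)  flag=z ;;
        bz2) flag=j ;;
        xz)  flag=J ;;
        *)   echo "unknown format: $2" >&2; return 1 ;;
    esac
    # -C switches to the parent directory so the archive stores relative paths.
    tar -c"$flag"f "$dir.tar.$2" -C "$(dirname "$dir")" "$(basename "$dir")"
}
```

For example, `make_tarball logs xz` would create `logs.tar.xz`, and `tar -tJf logs.tar.xz` lists its contents.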

On the other hand, if CPU or time is a concern, you can use gzip, which is also more widely used and better known (useful if you have to send your archive somewhere).


I would like to thank you for your most interesting blog.

Occasionally, I try to read the Italian one…

Tanti auguri.

For bzip2, gzip and xz, you need to do the tests again with the -9 or --best option enabled. Since you seem to only be concerned about the compression ratio, and not the time it takes, it is only fair to use the functions internal to the compressors that “they” deem best.

Also, tar’s option j is for bzip2 on my ubuntu 10.04LTS system. What system are you using?

Finally, you have typo’d xz as xv in the second paragraph.

Thanks for the feedback.

Typo corrected. The -j (lowercase) is for bzip2 and -J (uppercase) is for xz; at least this works on Ubuntu 10.10.

I’m now redoing the test on txt files with your suggested options and I’ll publish the results later.

Thanks

An interesting write-up. Unfortunately the graphs lack context and meaning; could you clarify a few points:

Are the speeds in compressed or uncompressed data?

Do the times include iowait/disk access?

Which average was taken (mean)?

What kind of error is there in the measurements (e.g. the compression ratios for media all look like they would be the same if error bars were added)?

Hello,

Lots of technical questions, but I don’t have the answers :). The graphs are taken from another article, http://blog.terzza.com/linux-compression-comparison-gzip-vs-bzip2-vs-lzma-vs-zip-vs-compress/, so try checking with the author.

If you like these benchmarks check also: http://tukaani.org/lzma/benchmarks.html

What about 7z ?

I don’t use 7z because you usually don’t find it in the standard packages installed by most distros and, more importantly, it is not integrated with tar.

That said, I must say that 7z (in my test) gave the highest compression ratio. With the 64 MB text file I used the command:

7zr a -t7z -m0=lzma -mx=9 -mfb=64 -md=32m -ms=on big3.txt.7z big3.txt

And I got 2.2M big3.txt.7z, which is the same result as XZ.

Still, due to its integration with tar, I’d prefer XZ as the standard compression tool.

Thanks for the tips.

Nice comparison, but it would have been a bit better if the same file was used for default compression and -9 compression. This would have made the results comparable.

Big2.txt was generated randomly and I deleted it at the end of the test, so I was not able to run the same test on it.

But I’m now including in the article the results of standard compression (no options) for the big3.txt file.

No RAR?

I don’t use rar for the same reason as 7zip, no integration with tar, but I’ve done a test and on the 64 MB text file I got this result: 21579K big3.txt.rar

That’s better than gzip and bzip2, so if you are used to rar it’s quite good at compression, but still not as good as xz and 7z.

Bye

The AV file formats (.mp3, .jpg, etc.) are already compressed by their coding algorithm. For meaningful results, check compression of raw images or sound files. Then compare the “compressed” versions with the encoded versions for size. I predict that the compression ratios will be similar. However, not all the AV codings are “lossless” (reversible).

“..no integration with tar..”

please, run that:

tar --help | grep [-]I

#tar --help | grep [-]I

-I, --use-compress-program=PROG

Ok, but still too long for the lazy 😛